HA ZFS NFS Storage

I have described in this post how to set up RHCS (Red Hat Cluster Suite) for ZFS services. That setup is rather outdated, however: it works with RHEL/CentOS 6, but not with version 7. RHEL/CentOS 7 uses Pacemaker as its cluster infrastructure, which behaves, and is configured, entirely differently.

This is something I’ve done several times. In this particular case, however, I wanted to see whether there was a more “common” way of doing it: whether a path already existed, or whether I needed to create my own agents, as I did for RHCS 6 in the post mentioned above. The quick answer is that it has been done, and I’ve found some very good documentation here, so I need to thank Edmund White and his wiki.

I had to make several changes, though, because I wanted to use IPMI as the fencing mechanism before falling back to SCSI reservations (which I trust less), and because my hardware was different: it has no multipathing (single path only, so there was no point in adding complexity).

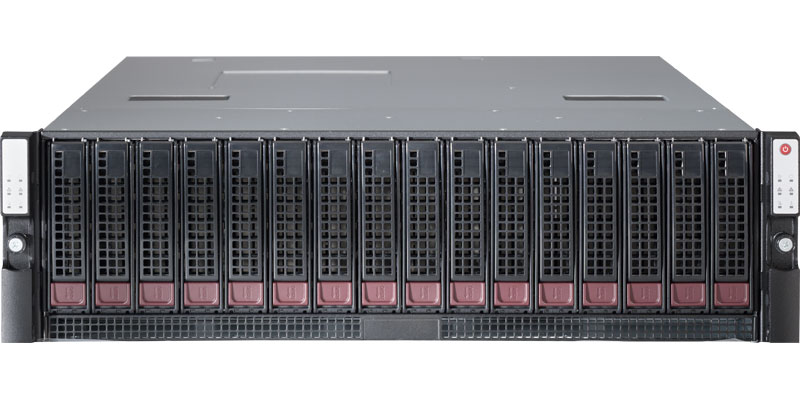

The hardware I’m using in this case is a Supermicro SBB with 15x 3.5″ shared disks (for our model) and some small internal storage, which we will ignore except as the place to install the Linux OS.

For now, I will only give a high-level view of the procedure. Edmund gave a wonderful explanation, and my modifications were minor at best. So this is a fast-paced procedure for installing everything, from a minimal CentOS 7 system to a running cluster. The main differences between Edmund’s version and mine are as follows:

- I used /etc/zfs/vdev_id.conf, not multipathing, for disk name aliases. The names include the disk slot number, which makes things easier for me later on.

- I have disabled SELinux. It is not required here, and would only increase complexity.

- I have used STONITH levels, a method of creating a fencing hierarchy in which you attempt one (or several) fencing methods before moving on to the next level. A good example would be power fencing by switching off two PDU sockets (both must be disconnected at the same time, or else the target server would remain on) and, if that fails, moving on to SCSI fencing. In my case, I’ve used IPMI fencing as the first level and SCSI fencing as the second.

- This was created as a cluster for XenServer. While XenServer supports both NFSv3 and NFSv4, it appears that the NFS server (nfsd) does not release NFSv4 file handles immediately when performing an ‘unexport’ operation. This prevents the cluster from failing over cleanly, and results in a node reset and bad things happening. So I prevented the system from exporting NFSv4 at all.

- The ZFS agent recommended by Edmund has two bugs that I noticed and fixed. You can get my version here; it is a pull request against the version Edmund suggested.

yum groupinstall "high availability"

yum install epel-release

# Edit the ZFS yum repository configuration to use the DKMS packages, and then

yum install kernel-devel zfs

# Download the ZFS agent

wget -O /usr/lib/ocf/resource.d/heartbeat/ZFS https://raw.githubusercontent.com/skiselkov/stmf-ha/e74e20bf8432dcc6bc31031d9136cf50e09e6daa/heartbeat/ZFS

chmod +x /usr/lib/ocf/resource.d/heartbeat/ZFS

systemctl disable firewalld

systemctl stop firewalld

systemctl disable NetworkManager

systemctl stop NetworkManager

# Disable SELinux: edit /etc/selinux/config and set SELINUX=disabled
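
The SELinux edit can also be scripted. The following is a minimal sketch rather than part of the original procedure: `disable_selinux_in` is a hypothetical helper name, and the demonstration runs against a scratch file so that nothing on the system is changed.

```shell
# Sketch: set SELINUX=disabled in an SELinux config file.
# disable_selinux_in is a hypothetical helper, not a system command.
disable_selinux_in() {
    # $1: path to the config file, normally /etc/selinux/config
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$1"
}

# Demonstration on a scratch copy, so the real configuration is untouched.
selinux_scratch=$(mktemp)
printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$selinux_scratch"
disable_selinux_in "$selinux_scratch"
cat "$selinux_scratch"
```

On a real node the call would be `disable_selinux_in /etc/selinux/config`, run as root. The change takes effect at the next boot; `setenforce 0` switches the running system to permissive mode in the meantime.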

systemctl enable corosync

systemctl enable pacemaker

yum install kernel-devel zfs

systemctl enable pcsd

systemctl start pcsd

# Edit /etc/zfs/vdev_id.conf to set up the device aliases
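
For reference, a vdev_id.conf built from `alias` lines might look like the sketch below. The alias names follow the slot-number scheme used in this post, but the by-path device links are made-up placeholders (the real ones come from `ls -l /dev/disk/by-path` on your hardware), and the file is written to a scratch location rather than to /etc/zfs.

```shell
# Sketch: write a sample vdev_id.conf to a scratch file.
# The by-path links are hypothetical placeholders; substitute your own.
vdev_conf=$(mktemp)
cat > "$vdev_conf" <<'EOF'
# alias <name> <device link>
alias d03 /dev/disk/by-path/pci-0000:03:00.0-sas-phy2-lun-0
alias d04 /dev/disk/by-path/pci-0000:03:00.0-sas-phy3-lun-0
EOF

# Count the aliases as a quick sanity check before installing the file.
alias_count=$(grep -c '^alias ' "$vdev_conf")
echo "wrote $alias_count aliases to $vdev_conf"
```

Once the reviewed file is installed as /etc/zfs/vdev_id.conf, `udevadm trigger` re-runs the udev rules, and the aliases should appear under /dev/disk/by-vdev/.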

zpool create storage -o ashift=12 -o autoexpand=on -o autoreplace=on -o cachefile=none mirror d03 d04 mirror d05 d06 mirror d07 d08 mirror d09 d10 mirror d11 d12 mirror d13 d14 spare d15 cache s02
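
The pool above consists of six two-way mirrors (slots 3 to 14), one hot spare (d15) and one cache device (s02). `cachefile=none` keeps the pool out of the zpool cache file, so it is not imported automatically at boot and the import is left to the cluster. With this many devices a typo is easy to make, so as a small sketch (not part of the original procedure) the same command line can be assembled in a loop and reviewed before it is run:

```shell
# Assemble the mirror pairs d03/d04 ... d13/d14 from the slot numbers.
vdevs=""
slot=3
while [ "$slot" -le 13 ]; do
    vdevs="$vdevs mirror $(printf 'd%02d d%02d' "$slot" "$((slot + 1))")"
    slot=$((slot + 2))
done

# Only print the command; run it by hand as root after checking it.
zpool_cmd="zpool create storage -o ashift=12 -o autoexpand=on -o autoreplace=on -o cachefile=none${vdevs} spare d15 cache s02"
echo "$zpool_cmd"
```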

zfs set compression=lz4 storage

zfs set atime=off storage

zfs set acltype=posixacl storage

zfs set xattr=sa storage

# Edit /etc/sysconfig/nfs and add "-N 4.1 -N 4" to RPCNFSDARGS
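
As a sketch of that edit, the helper below (a hypothetical name, `set_nfsd_args`) sets RPCNFSDARGS in a sysconfig-style file. Note that it replaces any existing value instead of appending to it, which is an assumption that only holds if the variable was empty to begin with. It is demonstrated on a scratch file.

```shell
# Sketch: make nfsd refuse NFSv4 and NFSv4.1 by setting RPCNFSDARGS.
# set_nfsd_args is a hypothetical helper; it overwrites any existing value.
set_nfsd_args() {
    # $1: sysconfig file, normally /etc/sysconfig/nfs
    if grep -q '^RPCNFSDARGS=' "$1"; then
        sed -i 's/^RPCNFSDARGS=.*/RPCNFSDARGS="-N 4.1 -N 4"/' "$1"
    else
        echo 'RPCNFSDARGS="-N 4.1 -N 4"' >> "$1"
    fi
}

# Demonstration on a scratch file.
nfs_scratch=$(mktemp)
printf '# sample sysconfig file\nRPCNFSDARGS=""\n' > "$nfs_scratch"
set_nfsd_args "$nfs_scratch"
grep '^RPCNFSDARGS=' "$nfs_scratch"
```

After nfs-server has been restarted with the new setting, `cat /proc/fs/nfsd/versions` on the node should list the disabled versions with a minus sign in front of them.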

systemctl enable nfs-server

systemctl start nfs-server

zfs create storage/vm01

zfs set sharenfs=rw=@1.1.1.0/24,async,no_root_squash,no_wdelay storage/vm01

passwd hacluster # Set a known password

systemctl start pcsd

pcs cluster auth storagenode1 storagenode2

pcs cluster setup --start --name zfs-cluster storagenode1,storagenode1-storage storagenode2,storagenode2-storage

pcs property set no-quorum-policy=ignore

pcs stonith create storagenode1-ipmi fence_ipmilan ipaddr="storagenode1-ipmi" lanplus="1" passwd="ipmiPassword" login="cluster" pcmk_host_list="storagenode1"

pcs stonith create storagenode2-ipmi fence_ipmilan ipaddr="storagenode2-ipmi" lanplus="1" passwd="ipmiPassword" login="cluster" pcmk_host_list="storagenode2"

pcs stonith create fence-scsi fence_scsi pcmk_monitor_action="metadata" pcmk_host_list="storagenode1,storagenode2" devices="/dev/sdb,/dev/sdc,/dev/sdd,/dev/sde,/dev/sdf,/dev/sdg,/dev/sdh,/dev/sdi,/dev/sdj,/dev/sdk,/dev/sdl,/dev/sdm,/dev/sdn,/dev/sdo,/dev/sdp" meta provides=unfencing

pcs stonith level add 1 storagenode1 storagenode1-ipmi

pcs stonith level add 1 storagenode2 storagenode2-ipmi

pcs stonith level add 2 storagenode1 fence-scsi

pcs stonith level add 2 storagenode2 fence-scsi
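
Once the levels are defined, it is worth confirming that Pacemaker sees them the way you intended. The following sketch is not from the original procedure. It prints the commands by default (the `run` wrapper and the `RUN` variable are hypothetical helpers) and executes them only when `RUN=1` is set on a cluster node.

```shell
# Dry-run wrapper: print the command unless RUN=1 is set.
run() {
    if [ "${RUN:-0}" = "1" ]; then
        "$@"
    else
        echo "+ $*"
    fi
}

check_fencing() {
    run pcs stonith level          # list the configured levels per node
    run pcs stonith level verify   # check that referenced devices and nodes exist
}

check_fencing

# Destructive: this really fences the peer node, so it is left commented out.
# run pcs stonith fence storagenode2
```

A real fencing test against the peer is the only way to be sure the IPMI level works before the SCSI level is ever needed, but it should be done while the cluster carries no production load.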

pcs resource defaults resource-stickiness=100

pcs resource create storage ZFS pool="storage" op start timeout="90" op stop timeout="90" --group=group-storage

pcs resource create storage-ip IPaddr2 ip=1.1.1.7 cidr_netmask=24 --group group-storage
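
Resources in a group start in the order in which they were added and stop in reverse order, so here the pool is imported before the service IP comes up. A simple way to exercise a failover is to put the active node into standby and watch the group move. The sketch below only prints such a plan (`failover_plan` is a hypothetical helper). On a real cluster, run the commands one at a time and give the resources time to move before checking the status.

```shell
# Sketch: print a manual failover test plan for the given node.
failover_plan() {
    node="$1"   # the node that currently runs group-storage
    cat <<EOF
pcs cluster standby $node
pcs status resources
pcs cluster unstandby $node
EOF
}

failover_plan storagenode1
```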

# It might be necessary to unfence the SCSI disks; this is how:

fence_scsi -d /dev/sdb,/dev/sdc,/dev/sdd,/dev/sde,/dev/sdf,/dev/sdg,/dev/sdh,/dev/sdi,/dev/sdj,/dev/sdk,/dev/sdl,/dev/sdm,/dev/sdn,/dev/sdo,/dev/sdp -n storagenode1 -o on

# Check whether the node has a reservation on the disks, to know if we need to unfence

sg_persist --in --report-capabilities -v /dev/sdc
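
`--report-capabilities` describes what the device supports. To see the registered keys and the current reservation holder, `sg_persist` also offers `--read-keys` and `--read-reservation`. As a sketch, assuming the shared disks are /dev/sdb through /dev/sdp as in the fence_scsi definition above, the loop below prints one pair of queries per disk instead of running them, so the list can be reviewed first (`print_pr_queries` is a hypothetical helper name).

```shell
# Sketch: print persistent-reservation queries for the 15 shared disks.
print_pr_queries() {
    for letter in b c d e f g h i j k l m n o p; do
        echo "sg_persist --in --read-keys --device=/dev/sd$letter"
        echo "sg_persist --in --read-reservation --device=/dev/sd$letter"
    done
}

print_pr_queries

# To actually run the queries, as root on a cluster node:
# print_pr_queries | sh
```

A node that has been fenced by fence_scsi should be missing from the key list of each disk, which is the sign that it needs to be unfenced.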

Here is the list: